
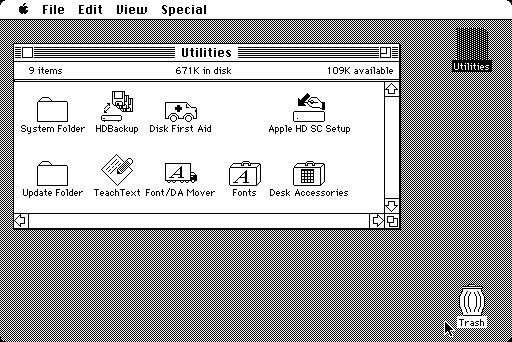

The A-Max is an Apple Macintosh emulator: a cartridge that plugs into the Amiga's floppy drive port and includes a pass-through for the Amiga's native drives. The cartridge has two ROM sockets for either 64K or 128K ROMs and is actually signed internally by the designers. It also has a connector to attach an Apple Mac 800K disk drive.

Emulators: BasiliskII, Mini vMac. Mini vMac emulates the 68K-processor Macs (older software), e.g. Macintosh Classic, Macintosh II.

- Emulates Mac Plus, Mac II or Mac 128K
- Full simulated keyboard (including all Mac keys)
- Full sound output
- Uses external keyboard and mouse if available
- Adjustable emulation speed
- Easy(ish) to import/export disk images

Requirements:

- iOS 7 or later
- ROM image from Mac Plus, Mac II and/or Mac 128K
- Disk images with Mac software
- Xcode 7 or 8

Topics: OpenZFS and DTrace updates in NetBSD, NetBSD network stack security audit, performance of MySQL on ZFS, OpenSMTPD results from p2k18, legacy Windows backup to FreeNAS, ZFS block size importance, and NetBSD as a router on a stick.

# ZFS and DTrace update lands in NetBSD

Merge a new version of the CDDL dtrace and ZFS code. This changes the upstream vendor from OpenSolaris to FreeBSD, and this version is based on FreeBSD svn r315983. r315983 is from March 2017 (14 months ago), so there is still more work to do. In addition to the 10 years of improvements from upstream, this version also has these NetBSD-specific enhancements:

- dtrace FBT probes can now be placed in kernel modules.

# NetBSD network stack security audit

Maxime Villard has been working on an audit of the NetBSD network stack, a project sponsored by The NetBSD Foundation, which has served all users of BSD-derived operating systems. Over the last five months, hundreds of patches were committed to the source tree as a result of this work. Dozens of bugs were fixed, among them a good number of actual, remotely triggerable vulnerabilities.

Changes were made to strengthen the networking subsystems and improve code quality: reinforce the mbuf API, add many KASSERTs to enforce assumptions, simplify packet handling, and verify compliance with RFCs. This was done in several layers of the NetBSD kernel, from device drivers to L4 handlers.

In the course of investigating several bugs discovered in NetBSD, I happened to look at the network stacks of other operating systems, to see whether they had already fixed the issues, and if so how. A lot of code is shared between the BSDs, so when one finds a bug, it is especially helpful to check the other BSDs and share the fix.

The IPv6 Buffer Overflow: The overflow allowed an attacker to write one byte of packet-controlled data into `packet_storage+off`, where `off` could be approximately controlled too. This allowed at least a pretty bad remote DoS/crash.

The IPsec Infinite Loop: When receiving an IPv6-AH packet, the IPsec entry point was not correctly computing the length of the IPv6 suboptions, and it did so before authentication. As a result, a specially crafted IPv6 packet could trigger an infinite loop in the kernel (making it unresponsive). In addition, this flaw allowed a limited buffer overflow, where the data being written was however not controllable by the attacker.

The IPPROTO Typo: While looking at the IPv6 multicast code, I stumbled across a pretty simple yet pretty bad mistake: at one point the PIM6 entry point would return `IPPROTO_NONE` instead of `IPPROTO_DONE`. Returning `IPPROTO_NONE` was entirely wrong: it caused the kernel to keep iterating on the IPv6 packet chain, while the packet storage was already freed.

The PF Signedness Bug: A bug was found in NetBSD's implementation of the PF firewall that did not affect the other BSDs. In the initial PF code, a particular macro was used as an alias to a number. NetBSD replaced the macro with a `sizeof()`, which returns an unsigned result.

The NPF Integer Overflow: An integer overflow could be triggered in NPF when parsing an IPv6 packet with large options. This could cause NPF to look for the L4 payload at the wrong offset within the packet, and it allowed an attacker to bypass any L4 filtering rule on IPv6.

The IPsec Fragment Attack: I noticed some time ago that when reassembling fragments (in either IPv4 or IPv6), the kernel was not removing the `M_PKTHDR` flag on the secondary mbufs in mbuf chains. This flag is supposed to indicate that a given mbuf is the head of the chain it forms; having the flag on secondary mbufs was suspicious.

What Now: Not all protocols and layers of the network stack were verified, because of time constraints, and also because of unexpected events: the recent x86 CPU bugs, which I was the only one able to fix promptly. A todo list will be left when the project end date is reached, for someone else to pick up. Me perhaps, later this year? We'll see.

This security audit of NetBSD's network stack is sponsored by The NetBSD Foundation, and serves all users of BSD-derived operating systems.

# Performance of MySQL on ZFS

I ended up with 330 tables for a total size of about 850GB. The dataset generated by sysbench is not very compressible, so I used lz4 compression in ZFS. For the other ZFS settings, I used what can be found in my earlier ZFS posts but with the ARC size limited to 1GB.

ZFS is much less affected by file-level fragmentation, especially for point-access workloads. Only one InnoDB page, a leaf page, needed to be read per point-select statement; the extra IOPS performed by ZFS are needed to access the internal blocks in the B-trees of the files.

ZFS stores files in B-trees in a very similar fashion to how InnoDB stores data. To access a piece of data in a B-tree, you need to access the top-level page (often called the root node) and then one block per level down to a leaf node containing the data. With no cache, reading something from a three-level B-tree thus requires 3 IOPS.

These internal blocks are labeled as metadata. Essentially, in the above benchmark, the ARC is too small to contain all the internal blocks of the table files' B-trees. If we continue the comparison with InnoDB, it would be like running with a buffer pool too small to contain the non-leaf pages.

To correctly set the ARC size to cache the metadata, you have two choices. First, you can guess values for the ARC size and experiment. Second, you can try to evaluate it by looking at the ZFS internal data.
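The B-tree reasoning above can be turned into a rough estimate of how much metadata the ARC would need to hold. This is a hypothetical back-of-the-envelope sketch, not the author's method. Only the 330 tables and ~850GB come from the benchmark description. Everything else is an assumption:

- a 16 KiB ZFS recordsize;
- 128 KiB indirect blocks and 128-byte block pointers;
- data spread evenly across the table files;
- compression and the dnode's own block pointers ignored.

```python
import math

# Hypothetical parameters for a back-of-the-envelope estimate. Only TABLES and
# DATASET_BYTES come from the benchmark description; the rest are assumptions
# (16 KiB recordsize, 128 KiB indirect blocks, 128-byte block pointers,
# data spread evenly over the table files, compression ignored).
TABLES = 330
DATASET_BYTES = 850 * 1024**3        # "about 850GB", treated as GiB here
RECORD_SIZE = 16 * 1024              # assumed ZFS recordsize (leaf block)
INDIRECT_BLOCK_SIZE = 128 * 1024     # assumed indirect (internal) block size
BLOCK_POINTER_SIZE = 128             # size of one ZFS block pointer
FANOUT = INDIRECT_BLOCK_SIZE // BLOCK_POINTER_SIZE  # 1024 pointers per block


def indirect_levels(file_bytes: int) -> list[int]:
    """Count internal blocks per level of one file's block tree, bottom-up.

    Simplified model: levels are added until a single root block covers the
    whole file (the dnode's own block pointers are ignored).
    """
    blocks = math.ceil(file_bytes / RECORD_SIZE)  # leaf (data) blocks
    levels = []
    while blocks > 1:
        blocks = math.ceil(blocks / FANOUT)
        levels.append(blocks)
    return levels


def reads_per_point_lookup(tree_depth: int, cached_levels: int) -> int:
    """Disk reads for one point lookup when the top `cached_levels` levels are in the ARC."""
    return max(tree_depth - cached_levels, 0)


per_file = DATASET_BYTES // TABLES
levels = indirect_levels(per_file)
depth = len(levels) + 1  # internal levels plus the leaf level
metadata_bytes = TABLES * sum(levels) * INDIRECT_BLOCK_SIZE

print(f"internal blocks per level (bottom-up): {levels}")
print(f"tree depth: {depth}")
print(f"reads per point lookup, nothing cached: {reads_per_point_lookup(depth, 0)}")
print(f"reads per point lookup, internal levels cached: {reads_per_point_lookup(depth, depth - 1)}")
print(f"estimated metadata: {metadata_bytes / 1024**3:.1f} GiB (uncompressed)")
```

Under these assumptions, each table file gets a three-level tree (two internal levels plus the leaf). That is consistent with the 3 reads per uncached lookup described above. The internal blocks add up to roughly 6.7 GiB, far more than a 1GB ARC can hold. Metadata compression and partially filled blocks would change the exact figure, so treat it as an order of magnitude only.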

I was reluctant to grow the ARC to 7GB, which was nearly half the overall system memory. At best, the ZFS performance would only match XFS. Another option is to use a larger InnoDB page size, like 64KB. However, on an instance with only two vCPUs, a larger InnoDB page size would increase the CPU load for decompression, which is not great either.

Using the ephemeral device of an i3.large instance for the ZFS L2ARC, ZFS outperformed XFS by 66%. ZFS allows you to optimize the use of EBS volumes, both in terms of IOPS and size, when the instance has fast ephemeral storage devices. When properly cached, the performance of ZFS is excellent.

# OpenSMTPD results from p2k18

The OpenBSD p2k18 hackathon took place at Epitech in Nantes. I was organizing the hackathon but managed to make progress on OpenSMTPD.

- As mentioned at EuroBSDCon, the one-line-per-rule config format was a design error.
- A new configuration grammar is almost ready, and the underlying structures are simplified.
- The refactor removes ~750 lines of code and solves _many_ issues that were side effects of the design error.
- New features are going to be unlocked thanks to this.

OpenSMTPD started ten years ago out of dissatisfaction with other solutions, mainly because I considered them way too complex for me not to get things wrong from time to time.


Author: Sarah
